Demo: Problem solving, decision-making, and visual search in a VR Escape Room game, with multi-modal bio-sensing

Note: Click here to view these Demos in a YouTube playlist

Demos

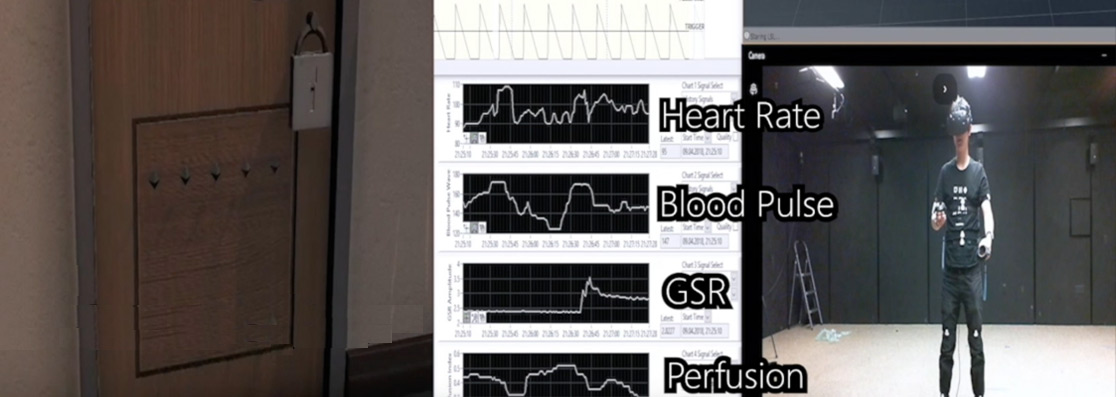

New demo: MoBI lab and Virtual Reality Escape Room

Problem solving and visual search in a VR Escape Room game: A multi-modal approach

MoBI stands for Mobile Brain/Body Imaging (Makeig et al., 2009). MoBI is a multi-modal neuroimaging approach for studying the human brain while participants move and behave freely. The VR Escape Room uses multi-modal bio-sensing, including a Cognionics EEG headband, a BioVotion armband (with 23 physiological data streams), an eye-gaze tracking module, and real-time stream recording, to monitor neural, physiological, and behavioral data from freely moving participants.

New demo: Visual search under stress

This project aims to model an ecologically valid visual search process that involves uncertainty and psychological stress during navigation of a 3D virtual environment. Participants are instructed to scan the room to locate target bullets while avoiding similar-looking distractors. In the low-stress version of the game, 10 minutes are allotted to complete the task, while in the high-stress version, only five minutes are allowed. By recording the electroencephalogram (EEG), we can evaluate changes in cognitive processes, such as attention and memory, during the search tasks. The electrocardiogram (ECG) provides information about heart rate and heart rate variability, which are related to autonomic arousal. Changes in arousal can reflect the dynamics of the stress response. Eye-tracking data from the HTC Vive Pro Eye headset can reveal how patterns of scanning and fixation change as a function of stress. Because eye-gaze information is synchronized with the EEG, we can compare brain responses time-locked to fixations on targets versus distractors.
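The fixation-locked comparison described above can be sketched as follows. This is a minimal illustration, not the lab's actual pipeline: it assumes a single EEG channel held in a plain list, fixation onsets already expressed in seconds on the EEG clock, and hypothetical function names and window lengths (`fixation_locked_epochs`, `average_epochs`, `pre`, `post`).

```python
def fixation_locked_epochs(eeg, srate, fixation_times, pre=0.2, post=0.8):
    """Cut fixed-length EEG segments around fixation onsets.

    eeg            -- samples of one EEG channel (list of floats)
    srate          -- sampling rate in Hz
    fixation_times -- fixation onsets in seconds, on the same clock as the EEG
    pre, post      -- window before/after each onset in seconds (assumed values)
    """
    n_pre = int(round(pre * srate))
    n_post = int(round(post * srate))
    epochs = []
    for t in fixation_times:
        onset = int(round(t * srate))
        start, stop = onset - n_pre, onset + n_post
        if start < 0 or stop > len(eeg):
            continue  # skip fixations too close to the recording edges
        epochs.append(eeg[start:stop])
    return epochs


def average_epochs(epochs):
    """Average epochs sample by sample to get a fixation-related potential."""
    if not epochs:
        return []
    n = len(epochs)
    return [sum(column) / n for column in zip(*epochs)]


if __name__ == "__main__":
    # Synthetic data: a 10 s ramp sampled at 100 Hz, with made-up fixation times.
    eeg = [float(i) for i in range(1000)]
    target_epochs = fixation_locked_epochs(eeg, 100, [1.0, 5.0], pre=0.1, post=0.2)
    print(len(target_epochs), average_epochs(target_epochs)[0])
```

Running the same two functions separately on target fixations and distractor fixations gives two averaged responses that can then be compared.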

New demo: Clinical Applications of EEG and BCIs

We have recently developed a new multi-modal apparatus and experimental protocol built on steady-state visual evoked potential (SSVEP)-based BCIs. The apparatus combines a head-mounted display (HMD), EEG/EOG sensors and amplifiers, an eye tracker, and motion capture. In addition, we have developed visual and auditory stimuli, signal-processing pipelines, and machine-learning classifiers to evaluate the afferent and efferent visual pathways of users with glaucoma and multiple sclerosis. We believe that this apparatus is capable of evaluating a wide range of neurological conditions and psychiatric disorders.

Real-time EEG Source-mapping Toolbox and its application to automatic artifact removal

Our group recently developed and tested a new MATLAB-based toolbox, the Real-time EEG Source-mapping Toolbox (REST), for real-time multi-channel EEG data analysis and visualization (Pion-Tonachini, Hsu et al., EMBC 2015; Pion-Tonachini, Hsu et al., 2018). Below are video demos of the REST toolbox and its application to fully automatic EEG artifact removal.

Real-time Automatic Artifact Rejection using REST 2.0

High-speed spelling with a noninvasive brain-computer interface.

Nakanishi, M., Wang, Y., Chen, X., Wang, Y.-T., Gao, X., Jung, T.-P., "Enhancing detection of SSVEPs for a high-speed brain speller using task-related component analysis," IEEE Trans. Biomed. Eng., 65 (1): 104-12, 2018.

This study proposes and evaluates a novel data-driven spatial filtering approach for enhancing steady-state visual evoked potential (SSVEP) detection toward a high-speed brain-computer interface (BCI) speller.

Methods: Task-related component analysis (TRCA), which can enhance reproducibility of SSVEPs across multiple trials, was employed to improve the signal-to-noise ratio (SNR) of SSVEP signals by removing background electroencephalographic (EEG) activities. An ensemble method was further developed to integrate TRCA filters corresponding to multiple stimulation frequencies. This study conducted a comparison of BCI performance between the proposed TRCA-based method and an extended canonical correlation analysis (CCA)-based method using a 40-class SSVEP dataset recorded from 12 subjects. An online BCI speller was further implemented using a cue-guided target selection task with 20 subjects and a free-spelling task with 10 of the subjects. Results: The offline comparison results indicate that the proposed TRCA-based approach can significantly improve the classification accuracy compared with the extended CCA-based method. Furthermore, the online BCI speller achieved averaged information transfer rates (ITRs) of 325.33 ± 38.17 bits/min with the cue-guided task and 198.67 ± 50.48 bits/min with the free-spelling task.

Conclusion: This study validated the efficiency of the proposed TRCA-based method in implementing a high-speed SSVEP-based BCI. Significance: The high-speed SSVEP-based BCIs using the TRCA method have great potential for various applications in communication and control.
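For readers unfamiliar with ITR figures such as those reported above, the following sketch computes the widely used Wolpaw information transfer rate. It assumes that this standard definition applies here; the function name `itr_bits_per_min` and the example selection time are illustrative and are not taken from the paper.

```python
import math


def itr_bits_per_min(n_targets, accuracy, seconds_per_selection):
    """Wolpaw information transfer rate in bits per minute.

    n_targets             -- number of selectable classes (N)
    accuracy              -- classification accuracy P, between 0 and 1
    seconds_per_selection -- average time per selection, including gaze shifting
    """
    if n_targets < 2:
        raise ValueError("need at least two targets")
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    if seconds_per_selection <= 0:
        raise ValueError("selection time must be positive")

    bits = math.log2(n_targets)
    if accuracy > 0.0:
        bits += accuracy * math.log2(accuracy)
    if accuracy < 1.0:
        bits += (1.0 - accuracy) * math.log2((1.0 - accuracy) / (n_targets - 1))
    return bits * 60.0 / seconds_per_selection


if __name__ == "__main__":
    # Illustrative values only: 40 targets, perfect accuracy, 1 s per selection.
    print(round(itr_bits_per_min(40, 1.0, 1.0), 2))
```

Under this definition, ITR rises with the number of targets and the accuracy and falls with the time per selection, which is why shortening the required data length while keeping accuracy high is the main lever for a faster speller.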

High-speed spelling with a noninvasive brain-computer interface. CLICK to download/watch a new video demo of a very high-speed BCI speller.

Proceedings of the National Academy of Sciences, Nov. 8, 2015.

This study reports a noninvasive brain speller that achieved a multi-fold increase in information transfer rate compared to other existing systems. Based on extremely precise coding of frequency and phase in single-trial steady-state visual evoked potentials (SSVEPs), this study developed a new joint frequency-phase modulation method and a user-specific decoding algorithm to implement synchronous modulation and demodulation of electroencephalogram (EEG). The resulting speller obtained high spelling rates up to 60 characters (12 words) per minute. The proposed methodological framework of high-speed BCI can lead to numerous applications in both patients with motor disabilities and healthy people.
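As a rough illustration of joint frequency-phase coding, the sketch below assigns each target its own flicker frequency and initial phase and samples a sinusoidal luminance sequence at the display refresh rate. All numeric values (40 targets, 8 Hz base frequency, 0.2 Hz step, 0.35π phase step, 60 Hz refresh rate) and the function names are assumptions chosen for illustration; the study's actual parameters and decoding algorithm are not reproduced here.

```python
import math


def jfpm_codes(n_targets=40, f0=8.0, df=0.2, dphi=0.35 * math.pi):
    """Assign each target a (frequency in Hz, phase in radians) pair.

    Frequency and phase both advance by a fixed step from one target to the
    next, so neighbouring targets differ in both dimensions.
    """
    return [(f0 + k * df, (k * dphi) % (2.0 * math.pi)) for k in range(n_targets)]


def stimulus_luminance(freq, phase, n_frames, refresh_rate=60.0):
    """Per-frame luminance in [0, 1] for one flickering target.

    Samples a sinusoid at the monitor refresh rate, which allows flicker
    frequencies that are not integer divisors of the refresh rate.
    """
    return [
        0.5 * (1.0 + math.sin(2.0 * math.pi * freq * (i / refresh_rate) + phase))
        for i in range(n_frames)
    ]


if __name__ == "__main__":
    codes = jfpm_codes()
    freq, phase = codes[5]
    frames = stimulus_luminance(freq, phase, n_frames=30)  # 0.5 s at 60 Hz
    print(freq, round(phase, 3), [round(v, 2) for v in frames[:5]])
```

Because every target has a distinct frequency and phase, a decoder can in principle separate targets whose frequencies are very close, which is what makes short stimulation windows workable.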

BCI for drowsiness detection. CLICK to watch a new video demo of a mobile & wireless EEG system.

EEG signals are acquired using either dry or commercially available EEG electrodes and miniature electronic circuits, then transmitted via Bluetooth telemetry to a cell phone. The cell phone is programmed to assess fluctuations in an individual's alertness and capacity for performing cognitive tasks based on the received EEG signals.

Phone-dialing using brainwaves. CLICK to watch a new video demo of a cell-phone based brain dialer.

In the video, Yu-Te Wang, a co-investigator of this study, chooses a phone number to dial by looking at flickering numbers on a computer screen. The cell phone receives brainwaves via Bluetooth, decodes the signals associated with the selected numbers, and then places the call. This movie was filmed by another co-investigator of this study, Dr. Yijun Wang.